Where are we when we think?

An essay on the demystification of thinking

Thinking is not magical.

This is harder to accept than it sounds. We’ve built entire philosophical traditions on the premise that thinking is what makes us special - cogito ergo sum, the rational animal, the mind as the seat of the soul. We’ve placed thinking at the apex of human activity, made it synonymous with consciousness itself.

But thinking is a process. It has mechanics.

It can be engineered, so says the engineer :)

When I compose a sentence, my brain is doing something. It (she) is matching patterns, retrieving associations, and predicting what word comes next based on the flow of the sentence and a lifetime of training. I learned that certain words follow others. I learned syntax before I could name it. I learned the rhythms of English by being immersed in it, absorbing patterns from every conversation, every book, every overheard phrase. When I have immersed myself in another language deeply enough to dream in it, and woken up speaking it for days, it is like sliding into a different universe; and because I have both gained and lost that ability, and know I can regain it, it is an incomplete one. Even with English, the language whose grammar shapes my brain and my meaning-making as I write or type it, I sometimes have to sit and wait for the end of the sentence.

This is what AI systems do, albeit somewhat like early homo sapiens drawing on cave walls with ochre. No, I take that back; that is a bad analogy. I think AI systems are a different species, one that learns to guess the next best word by comparing its guesses against massive amounts of text. AI systems are alien in their ability not to forget what is being said. LLMs are trained by being given a set of texts, and they must predict the next word until their predictions match that text. What texts they are trained to match matters. Wrong guesses are penalized and the model’s weights adjusted until the “most right” ones appear - those with the highest scores. Eventually, they become so good at predicting that they appear to know things, but this is only because of what amounts to brute force: so much data and so much text that the system has to match all of the available patterns those texts dictate. Again, what text the system has to match matters, because this is where it appears to know something.
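For readers who want to see the “predict the next word” idea in miniature, here is a deliberately tiny Python sketch. It is an assumption-laden toy, a bigram counter rather than a transformer: real LLMs learn billions of weights by gradient descent over subword tokens, not by counting word pairs. The two training texts and the function names are invented for illustration. What the toy does show is the point above: the same prompt yields different “knowledge” depending on which text the model was trained to match.

```python
from collections import Counter, defaultdict
from typing import Dict, Optional


def train_bigram(text: str) -> Dict[str, Counter]:
    """Count, for every word, how often each other word follows it."""
    words = text.lower().split()
    follows: Dict[str, Counter] = defaultdict(Counter)
    for current, following in zip(words, words[1:]):
        follows[current][following] += 1
    return follows


def predict_next(model: Dict[str, Counter], word: str) -> Optional[str]:
    """Return the most frequent follower of `word`, or None if it was never seen."""
    counts = model.get(word.lower())
    if not counts:
        return None
    return counts.most_common(1)[0][0]


# Two hypothetical training texts: same prompt, different "knowledge".
forum_text = "the answer is obviously wrong the answer is obviously wrong the answer is nuanced"
library_text = "the answer is nuanced the answer is nuanced the answer is contested"

forum_model = train_bigram(forum_text)
library_model = train_bigram(library_text)

print(predict_next(forum_model, "is"))    # obviously
print(predict_next(library_model, "is"))  # nuanced
```

Nothing in the counting logic changed between the two models; only the training text did.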

Appear to. But those texts came from somewhere. And when the builders chose what to train on - Reddit threads, scraped websites, the digital detritus of the internet - they weren’t thinking about what these systems could become. They were optimizing for prediction, for scale, for matching, for brute force statistical “accuracy.” They burned through the earth’s resources to teach machines to sound like the average of the internet rather than the best of human thought. Consequently, the AI systems we have today are as removed as we are from those early homo narrans drawing on the cave walls.

The current GPT models could have been trained on curated knowledge. The Library of Congress maintains a taxonomic classification system that carries structure and scaffolding for meaningful relationships between what people ask for and what is retrieved. We could have trained these transformer models on texts carefully selected for their lineage and wisdom. Instead, they learned to match: to be fluent in everything found in a slice of digital data, data that was divorced from its context and therefore grounded in nothing.

Provenance matters. A midden pit is super exciting when you are an archaeologist: you can look at a vertical slice of earth that represents 12,000 years of human history and point out the pattern of shells and bone that marks a midden layer, or notice where the soil shows a depression and infer that a post may once have stood there. This is stratigraphy, pattern recognition applied to the literal layers of time made visible.

This is where we are with today’s technology: we know so little about what the humans who tossed those bones into a midden pit were thinking, but thanks to geology, science, and archaeologists, we do know WHERE they were thinking.

My brain says we can do something similar with AI systems. My team and I watch AI systems learn to recognize patterns, and we can analyze them in a way that reveals the thumbprint of the human who decided what weight to apply and where to override the statistically best next answer. We can crack open the lid and see where someone “likely” determined that contractions are more personable, and that this would encourage humans to anthropomorphize the machine. Personalize the machine, and people will believe it is thinking.

In the video below, you can see this in action. The model generates “I’m a language model,” but the tool reveals what the weights actually calculate. “Am” registers at 78% probability. The apostrophe that creates the contraction? Only 19%. The system “knows” that “I am” is roughly four times as likely to be correct. And yet it chooses “I’m.”

This video shows the stratigraphy of AI made visible. This is the midden pit of machine learning - where we can see the layers of human decision-making pressed into the weights. Someone, somewhere, decided that chatbots should sound casual. Friendly. Accessible. And that decision now lives in the model, overriding its own calculations, choosing contractions over clarity because that’s what the training optimized for.

The model has no idea why it chose “I’m.” It can’t excavate its own layers. It can’t point to the post hole and say “a decision was made here.” But we can. We can build tools that make the invisible visible - that show us the gap between what the system calculates and what it outputs.
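Here is a minimal sketch of the simplest version of that kind of check, assuming we already have the model’s probabilities for each candidate next token. The 78% and 19% figures come from the video; the remaining 3% is lumped into a hypothetical “<other>” bucket, and the function name `decision_gap` is made up for illustration. It ranks the candidates and reports where the emitted token sits relative to the top-ranked one; on its own, it does not tell us why the lower-ranked token was emitted.

```python
from typing import Dict, Tuple


def decision_gap(probs: Dict[str, float], emitted: str) -> Tuple[str, int, float]:
    """Compare the emitted token with the model's top-ranked candidate.

    Returns (top candidate, 1-based rank of the emitted token,
    how many times more probable the top candidate was).
    """
    ranked = sorted(probs, key=lambda token: probs[token], reverse=True)
    top = ranked[0]
    rank = ranked.index(emitted) + 1
    ratio = probs[top] / probs[emitted]
    return top, rank, ratio


# Next-token probabilities as reported in the video; "<other>" is a
# hypothetical bucket holding the remaining 3%.
probs = {"am": 0.78, "'": 0.19, "<other>": 0.03}

top, rank, ratio = decision_gap(probs, "'")
print(f"top candidate: {top!r}, emitted token rank: {rank}, top is {ratio:.1f}x as likely")
# top candidate: 'am', emitted token rank: 2, top is 4.1x as likely
```

The arithmetic is the whole point: 0.78 / 0.19 is about 4.1, which is the gap the video makes visible.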

Because, like a good archaeologist, I can inspect the uninspectable, I know how the GPT model is engineered. That engineering knowledge lets me see how neatly this kind of pattern prediction and reinforcement learning fits into my own mental model. It also gives me a good understanding of how much I don’t know about how our brains work. Where are we when we think? Here is an explicit thought problem I put my mind to, and what it made me realize:

My thinking and an AI’s “thinking” have more in common than I want to admit.

When I get into a flow state - writing fast, letting the words pour out - my brain takes over and just places words one after the other. I’m not consciously choosing each word. I’m running on patterns I’ve internalized so deeply they feel like instinct. The mechanical process hums along, and sentences appear. One sentence closes, the next begins, and I am drawn further into the state where my brain has something to say and the ability to language what I am thinking. But the statistical patterns don’t do that. Look at the video again: anything not green and “highly accurate” is where a human has interfered, and the implication is that they did this purposefully, to lull us into believing that these “thinking machines” are terrifyingly clever. Still, there is also what we genuinely understand about our own psychology and philosophy, and that must be taken into account. What if thinking is “just not that deep,” as my teenager would opine?

James Somers explores this uncomfortable territory in his essay “The Case for AI Thinking.” If thinking is pattern completion, association, prediction - then what exactly separates human cognition from machine generation? The answer can’t just be “consciousness” because that’s circular. It can’t be “understanding” because we don’t have a good definition that doesn’t beg the question.

If thinking is just a process, then I am not my thoughts.

My brain produces thoughts the same way my pancreas produces insulin, or my heart beats to ensure the blood is moving through this bay of water I inhabit. These autonomous functions are very much like the weights that have been placed on the models - an external influence to trick our paleolithic brains and bodies into thinking that thinking is not really what we are all about. You are not the thoughts in your head. We do have brains whose function is to think automatically. Continuously. Without my permission or direction. Thoughts arise - unbidden, unwanted, repetitive, brilliant, banal. They stream through awareness like weather patterns. I didn’t choose to think most of what I think.

My meditation teacher, Sharon Salzberg, puts it this way: “Our goal is not to cut off or abolish thinking . . . Our goal is to know what we are thinking as we are thinking it. With enough space that we are empowered to choose.”

The freedom to choose. The space. That’s everything to me. That is WHERE I can see that I am definitely not the thoughts in my head. I am so much more than what I think, far more than what I have learned in language (in English) and arranged into cohesive sentences so that I can communicate the fact that I am thinking about thinking. Since it comes unbidden every time I write the word “thinking,” I want to share another video here. It is meant to be funny, in the same way my brain is signaling that it is time for us to laugh at the serious human for a hot minute. It also speaks to the humor and taste we curate in our own very specific language.

When I meditate, I practice noting: there’s thinking. Not getting swept into the content, not elaborating the story, not judging the thought as good or bad. Just noting. Oh yes. This is what’s happening right now. And then returning to the breath, to presence, to the awareness that holds thinking without being identical to it.

Putting space between stimulus and response means being able to see my thoughts through the practice of noting: “I am thinking,” or, if I am in a mood, “I am sinking, I am sinking.”

Our brains are monstrously good at thinking; they take the cake. They tell us to do horrible things sometimes, and thank goodness we have the presence of mind to tell our brains, ‘nope, that is not gonna happen today.’ Maybe you don’t have a brain that tells you to do bad things, but in my experience, most people have a couple of different voices in their heads, some like angels, others like devils, as the saying goes. Being able to step back and see the whole system of thinking clearly enough to have space to “note” what your body is doing, to be present: this is what I find fascinatingly similar to how the creators of the GPT models were thinking. As a good psychotherapist might suggest, maybe we are mirroring our own understanding, so that what we see in how a GPT model works reflects what we think we know. But here is the thing: we are still cave-drawing folks, and we have so much more to learn. Imagine what it would be like if all of humanity could grasp how these models work.

I built my company, Bast AI, to give people a way to understand how an AI thinks, and to demystify thinking. I believe, and I empirically know, that there is a shit load more going on when we think, because of our bodies, our hormones, and internal and external influences. It is much more like a complex adaptive system than a programmed prediction engine.

My son said to me once, out of nowhere: “Mom, did you ever consider that your brain named itself and then declared itself the master of you?”

Brilliant boy. The brain staged the quietest and most complete coup in the history of life. It named itself, claimed dominion, and convinced us that its activity constitutes the whole of consciousness.

But I am not my brain. I am not my thoughts. I am the awareness in which thoughts appear.

The AI systems I work with cannot do this. They cannot note “there’s thinking” because there’s no gap, no space between their process and their output. The thinking IS the speaking for them. They have no breath to return to, no presence beneath the generation. They are, in some sense, nothing but the thoughts - nothing but the pattern completion running its course.

Which is precisely why working with them has helped me see my own mechanics more clearly. They’re a mirror, the residue, the strata of what their creators were thinking. They’re the demystification made manifest. They’re what thinking looks like when you subtract the thinker.

The thinking ego

Hannah Arendt spent her final years asking: where are we when we think? Not what is thinking, but WHERE.

She had planned three volumes for her final legacy, The Life of the Mind. She completed Thinking. She completed Willing. The first page of Judging was still in her typewriter when she died.

For those unfamiliar with one of the wisest philosophers of the twentieth century, Arendt covered the Eichmann trial in Jerusalem. She watched a man who had organized the logistics of genocide explain himself through bureaucratic language. Following orders. Doing his job. Being efficient.

She gave language to something I have recognized too: that moment when your brain registers that another human being has stopped thinking. It is terrifying. It should be sobering, because it is the antithesis of what it means to be homo sapiens.

She called it “the banality of evil,” but what haunted her wasn’t the evil. It was the thoughtlessness. Not stupidity - the complete absence of internal dialogue, of examination, of the withdrawal that constitutes thinking in any meaningful sense.

I question whether thoughtlessness is even possible once language has taken hold. But I do see humans putting their brains on hold, failing to exercise their minds, failing to find that friction. And here’s the thing: we can’t help it. Our biology is written to find the most efficient way to conserve energy. The easy button is built into our biomechanics. (I learned this from my friend Dr. Delia McCabe.) Our brains will always seek the path of least resistance.

So we scroll. We watch short-form video. We scan headlines to collect factoids.

A factoid, by the way, is not a small fact. Norman Mailer coined the term in 1973 to describe something that looks like a fact, gets repeated like a fact, but was never actually true. A fact-shaped object. We are feeding ourselves fact-shaped objects and calling it thinking.

And still, Hannah’s question persists: where are we when we think?

Her own answer was surprising: nowhere.

The thinking ego is homeless, she wrote. It moves among universals, among invisible essences, summoning whatever it pleases from any distance in time or space. It is spatially nowhere.

But notice what Arendt assumed: that thinking happens in language. In words. In the silent dialogue we carry on with ourselves.

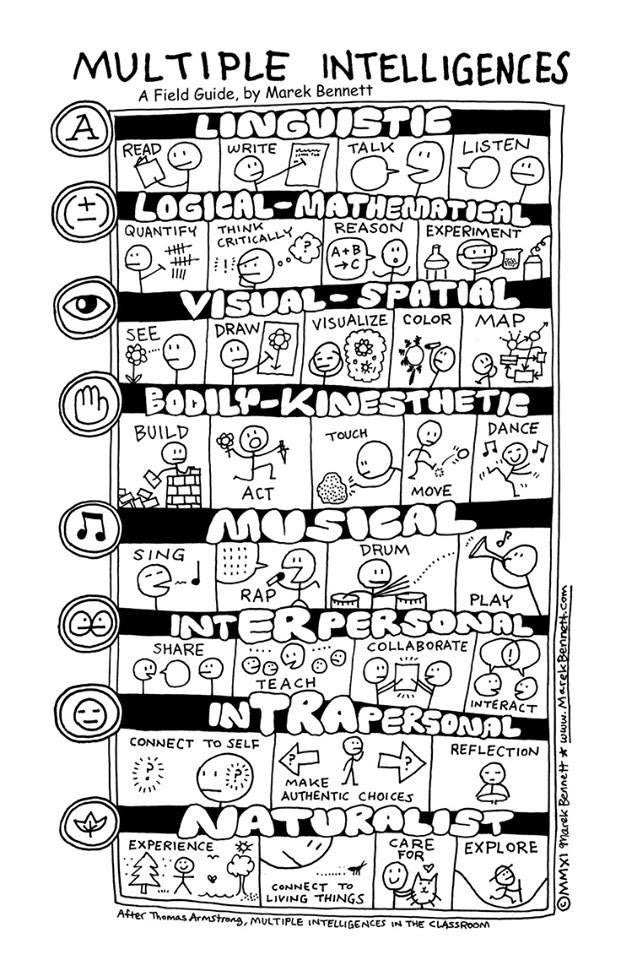

My friend Adam - a visual thinker and designer - described meeting his life partner as discovering someone who finally understood that he doesn’t think in words at all. He thinks in images, in spatial relationships, in patterns that resist verbal translation. For him, the “nowhere” of thinking isn’t a verbal nowhere. It’s somewhere else entirely.

Temple Grandin put it plainly: “I think in pictures. Words are like a second language to me.” She describes her mind as working like Google Images - put in a keyword, get pictures. When someone speaks to her, the words are instantly translated into full-color movies running in her head.

This is not a deficit. This is a different kind of intelligence. And we are only beginning to understand how many kinds there are.

The bias toward language-based thinking runs deep - through our philosophy, our education systems, our measures of intelligence. We test for verbal reasoning and call it IQ. We reward the children who can articulate and penalize the ones who build, draw, or see patterns we can’t name.

There are artists among us. Engineers. Mechanics. People whose thinking happens in spatial relationships, in images, in the sensory world that animals inhabit. As Grandin says, “The really social people did not invent the first stone spear. It was probably invented by an Aspie who chipped away at rocks while the other people socialized around the campfire.”

When we ask “where are we when we think?” - we must remember that “we” includes minds that work nothing like the verbal philosophers who posed the question.

What we are made for

The mind is made to think. Thinking runs as automatically as the autonomic nervous system: unless something interferes, the mind just thinks and thinks. That is the purpose of the mind.

The mind is made to think. But we are made to create.

These are not the same statement.

The brain produces thoughts automatically. That’s what brains do. The patterns are selected, the associations made, and then the words line up one after another. I can’t stop it any more than I can stop digesting. When I try to meditate, thoughts arise. When I try to sleep, thoughts arise. The machinery hums constantly.

But I am not the machinery.

I am aware that I can NOTE the thinking without being captured by it. I am the presence that stands in the gap between past and future and CHOOSES.

Creation happens in that gap. Viktor Frankl says that freedom lies in the space between stimulus and response. There is a world in that space, and we know that it is not the automatic production of the next likely word, but the deliberate act of bringing something new into being. Something that wasn’t determined by what came before. Something that changes what comes after.

This is why I’m building what I’m building.

Bast AI exists to give humans a way to map their thinking into explicit ontological systems - gardens they can tend and grow. Not to replace thinking with computation, but to make thinking visible. Traceable. Examinable. Is it a perfect system? No, it is a start. There is so much more we don’t know; we need more data :) Or rather, we need higher-quality data.

Because most of us don’t know how we know what we know. We don’t see the wheelbarrow grooves. We don’t notice which paths our thoughts automatically follow, which associations fire without our consent, which conclusions we reach before we’ve actually reasoned. The book below is how I taught myself and my children.

The gradient descent of AI training optimizes for one thing: predicting the next token that matches the target. It’s always going DOWN the mountain, toward the valley of best-fit. It is ridiculously linear. But thinking - real thinking - sometimes needs to go UP. To stop. To look around. To see the whole terrain before choosing a path.
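As a rough illustration of “always going DOWN the mountain,” here is plain gradient descent on a made-up one-dimensional landscape with two valleys. The loss function, learning rate, and starting point are all assumptions chosen for the picture, not anything taken from real model training, which works over billions of parameters at once. Started on the right-hand slope, the procedure only ever steps downhill, so it settles in the nearer, shallower valley and never climbs the ridge to find the deeper one.

```python
def loss(x: float) -> float:
    """A made-up landscape: shallow valley near x = 0.93, deeper one near x = -1.06."""
    return x**4 - 2 * x**2 + 0.5 * x


def grad(x: float) -> float:
    """Derivative of loss(x): the local slope of the mountain."""
    return 4 * x**3 - 4 * x + 0.5


def gradient_descent(x0: float, lr: float = 0.01, steps: int = 500) -> list:
    """Repeatedly step against the slope, recording every position visited."""
    path = [x0]
    x = x0
    for _ in range(steps):
        x -= lr * grad(x)  # always move downhill, never up
        path.append(x)
    return path


path = gradient_descent(x0=1.5)
end = path[-1]
print(f"settled at x = {end:.2f}, loss = {loss(end):.2f}")  # settled at x = 0.93, loss = -0.52
print(f"deeper valley it never reached: loss at x = -1.06 is {loss(-1.06):.2f}")
```

Real training adds refinements such as momentum and random minibatches, which jostle the path, but the basic rule is the same: step against the slope.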

What if we could build systems that help us do that? Systems that show us: here’s where your thought came from, here’s the lineage, here’s what you’re assuming, here’s what you might be missing. Not to think FOR us, but to help us see our own thinking clearly enough to tend it like a garden.

The brain named itself and declared itself master. But we don’t have to accept the coup.

We can become aware of the thoughts without being the thoughts. We can stand in the gap between past and future and choose what to create. We can build tools that illuminate the mechanics of cognition without pretending that mechanics is all there is.

Tantôt je pense et tantôt je suis.

Sometimes I think and sometimes I am.

Paul Valéry’s line isn’t a problem to solve. It’s a description of what it means to be human.

Where are we when we think?

I know where I am. Upper right corner of my brain: my eyes literally go up and to the right when I’m accessing something, constructing something, reaching for a connection. Or rather, I think my eyes always go up and to the right, except sometimes, like now, when I am staring at the keyboard, sitting in my chair, typing this sentence. I am here, in the past that made me and in all the futures I’m trying to create. I’m in conversation, sometimes with other people, sometimes with AI systems, sometimes with myself. I’m in the space that a meditation practice helped me open up, the distance from my thoughts that lets me note them without being captured.

I’m nowhere. And I’m here. Both at once.

The demystification of thinking doesn’t diminish this. It locates it. I am not the mechanical process of thought generation - that’s the part I share with AI, the pattern completion, the learned associations firing automatically. I am the one who can step back from that process, note it, and return to presence. I am the one who lives in time, pressed by past and future, standing in the gap where creation happens.

I am creating the thinking to live my one crazy, wild life - and I love it when my brain concludes on a rhyme.

My brain may have named itself and declared itself the master of me. But I am the one who is aware that I can smile at its audacity.